Arnaud said it during an internal meeting last week. We were reviewing a deliverable, talking about quality tolerances, the gap between what looks right and what is right. He wasn’t making a point. He was thinking out loud.

“The imperfection that creates perfection.”

I wrote it down. Not because it was clever. Because it described something I’d been circling around for months without finding the right frame.

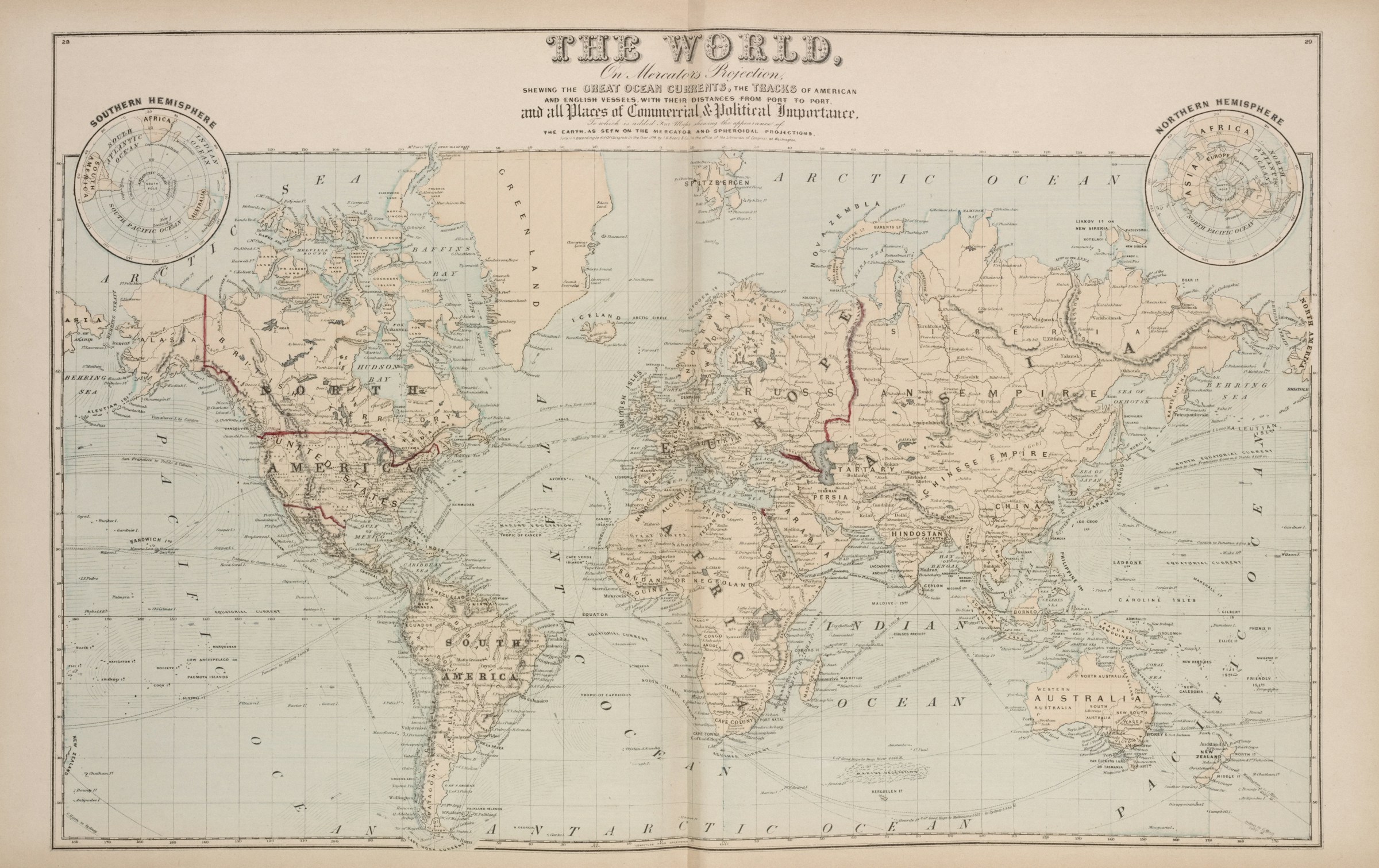

In Wetzlar, Germany, Leica assembles every lens by hand. Not as a marketing claim. As a manufacturing constraint. The tolerances involved in high-end optical engineering mean that two lenses of the exact same model, built on the same line, by the same technicians, will not produce exactly the same image. Photographers know this. They call it the “Leica rendering.” An organic quality to the image. Slightly warm, slightly imperfect, immediately recognizable. Not a flaw. A signature.

No image correction algorithm reproduces it. Because it isn’t a setting. It’s the accumulated result of specific imperfections in that specific lens, ground by that specific hand, on that specific day.

What the algorithm corrects away

Take the same scene. Same light, same frame. Shoot it with an iPhone and with a Leica M.

The iPhone gives you a perfect image. Sharp everywhere. Colors corrected. Sky enhanced. Skin smoothed. Fourteen algorithms decided for you what you wanted to see. The result is technically excellent. It is also functionally identical to the image anyone else would have taken with the same phone, in the same conditions.

The Leica gives you your image. With the grain of that moment. The sharpness of that particular lens, not another. The depth of field you chose, not the one a computational model calculated as optimal.

One produces consensus. The other produces point of view.

I keep thinking about this when a CEO shows me an IS audit he received from a previous provider. Clean. Complete. Twenty pages. All the right sections. All the expected recommendations. And this strange feeling that it could be the audit of any company of the same size, in the same sector.

The audit is technically correct. The recommendations are defensible. Nothing is wrong. But nothing is specifically right either. The report describes an archetype, not an organization. The algorithmic correction smoothed away everything that made this company’s situation unique.

The photographer William Eggleston once said something about the democratic forest: everything in the frame has the same value. The gas station and the cathedral get the same attention. That’s what a good diagnostic does. It doesn’t privilege what the framework says should be important. It reads the whole frame with equal attention and lets the actual situation determine what matters.

Most IS audits do the opposite. They arrive with the framework already loaded. The equivalent of an iPhone’s computational photography: before the shutter clicks, the software has already decided what the image should look like.

The photocopy bias

In 1928, Alexander Fleming left a petri dish open by mistake before going on holiday. A mold contaminated the bacterial culture. The bacteria around the mold died. Any rigorous lab technician would have discarded the contaminated dish, sterilized the bench, and started the experiment over.

Fleming looked at it.

What followed was penicillin. Millions of lives saved. Born from an error that any automated protocol would have corrected before it became visible.

The discovery wasn’t the accident. The discovery was the gaze. Someone who saw in the anomaly something other than a problem to fix.

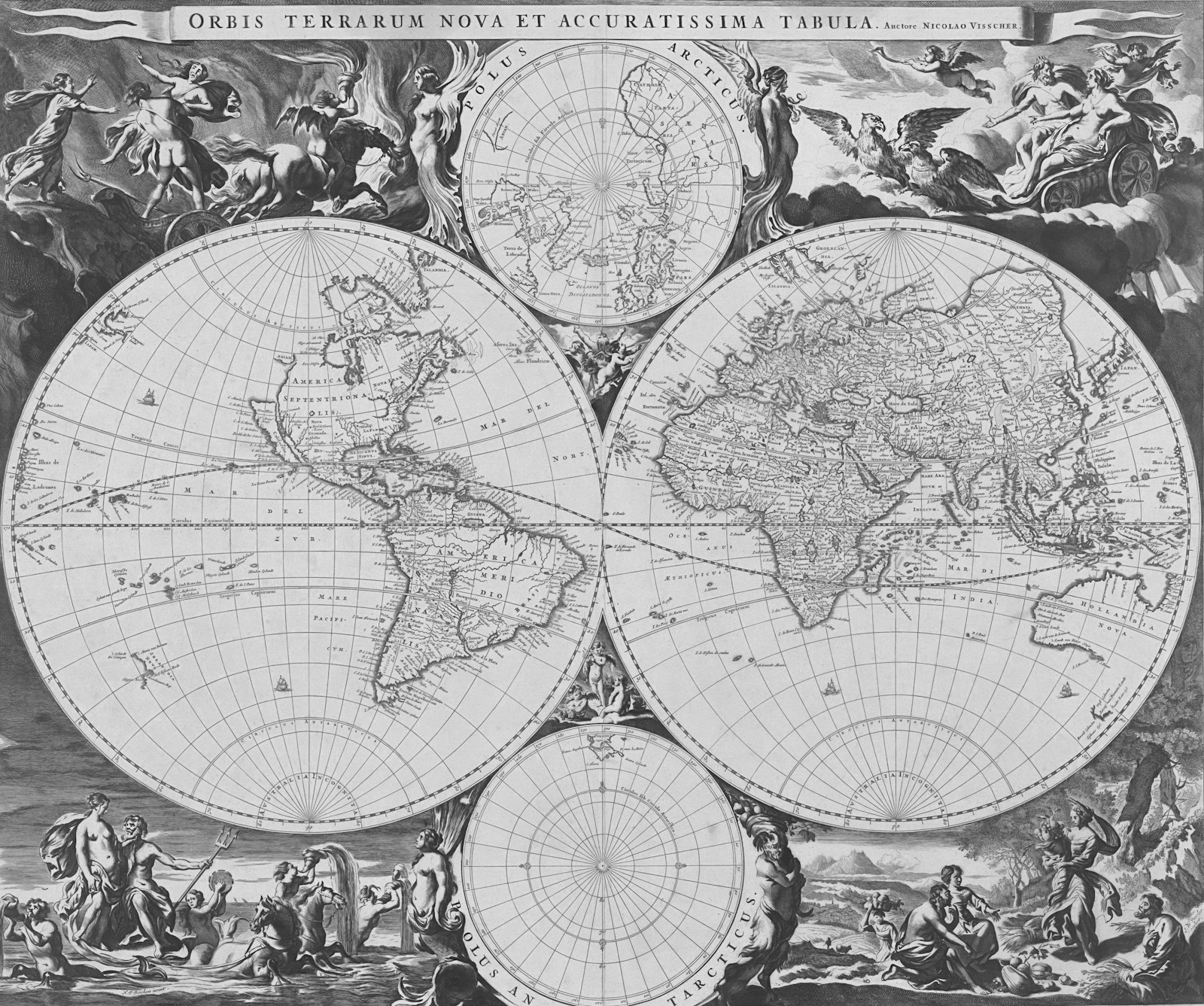

I think about Fleming when I watch what’s happening with generative AI and the knowledge cycle. Models trained on content. That produce content. Ingested by other models. That produce more content. Each iteration smooths the outliers. Eliminates the accidents. The distribution tightens around an artificial center of gravity that nobody chose.

This is the photocopy bias. Copy a text. Copy the copy. Copy that copy. After twenty iterations, the fine details are gone. What remains is legible, but it’s no longer the original text. The signal-to-noise ratio didn’t improve. The signal converged toward noise.

In IS diagnostics, I see it emerging. Audits starting to look alike. Not because organizations look alike. Because the tools look alike. Same analysis frameworks. Same pattern libraries. Same standard recommendations. “Refactor your technical debt.” “Migrate to the cloud.” “Implement data governance.”

Fine. But the technical debt in this particular organization exists because a CTO who left three years ago made a political decision disguised as a technical one, and nobody has dared to revisit it since. The real question isn’t in the code. It’s in the org chart. In a meeting that never happened.

No model trained on ten thousand cases sees that. It’s too local. Too contextual. Too human. The algorithm corrected it away.

What this looks like in an IS audit

I was called into a mid-sized industrial company last year. A hundred and twenty people. Three different providers had audited their IS in two years. Each one recommended refactoring the in-house ERP. Each report was thorough, well-structured, defensible. Each one arrived at the same conclusion through the same analytical path.

The CEO was hesitant to start a fourth project. Not because he doubted the diagnosis. Because something felt off and he couldn’t name what.

We spent two days on site. Not in the code. In the corridors. With the teams. The ERP worked. Badly documented, fragile in places, but it ran. The real issue was an architecture decision taken in 2021 by a CTO who had left the company six months after making it. A political choice dressed as a technical one. Everyone knew. Nobody had written it down.

The three previous audits had all seen the symptoms. Fragile ERP, inconsistent architecture, slow deployments. They’d all applied the standard pattern: the system is old, refactor it. None of them had asked why the system looked the way it did. Because the answer wasn’t in the system. It was in the history of the organization, in a decision that lived in the memory of three people who were still there but had never been asked.

We didn’t recommend refactoring. We recommended a conversation. Between the CEO, his operations director, and the dev team. Three hours in a room. With the real question on the table: do we own this inheritance and build on it, or do we start over, but knowing why?

Six months later, the system is running on the same base. Stabilized. Documented. The cost of a migration that didn’t need to happen was saved entirely.

That’s the Leica eye. Not sharper than the algorithm. Differently focused. Tuned to what makes this particular situation unlike any other, even when the surface pattern says otherwise.

The three previous audits weren’t bad. They were generic. They did exactly what they were designed to do: apply a proven framework to an observable situation and produce a defensible recommendation. The problem is that defensible and correct are not the same thing. A recommendation can be perfectly reasonable and entirely wrong for this specific organization, at this specific moment, given this specific history.

The Leica doesn’t take better photos than the iPhone. It takes different ones. Ones where the photographer’s judgment is the primary variable, not the algorithm’s optimization target.

The tool versus the oracle

I should be direct about something before anyone reads this as an anti-AI argument. I use AI tools. Every day. For synthesis, for modeling, for accelerating the parts of the work where speed doesn’t compromise quality. The carpenter who uses a power drill doesn’t apologize for not drilling by hand.

But the carpenter doesn’t ask the drill where to put the hole.

The distinction matters. AI as a tool in the hands of a practitioner is formidable. AI as an oracle replacing the practitioner is something else entirely. It confuses computational power with contextual understanding.

When I build a diagnostic for a client, AI helps me process information. It doesn’t help me sense that the CIO and the CFO haven’t spoken in six months. That the cloud migration was sold by a salesperson before an architect validated it. That the real problem isn’t the legacy system but the fear of touching something that still works.

That comes from accumulated experience. Hundreds of situations read, reread, sometimes gotten wrong. A filter that nobody could train because the data doesn’t exist anywhere. It lives in the meeting room. In the unspoken. In the look on the CEO’s face when someone says the word “overhaul.”

An iPhone gives you a perfect photo. A Leica gives you one that only you could have taken.

The question for the person commissioning the audit isn’t which tool was used. It’s whether the person behind it was looking through a calibrated lens or just pointing and shooting.

There’s a reason Leica still assembles lenses by hand in 2026, while every economic incentive in the world pushes toward automation. Some things cannot be produced by optimizing for the average. The specific, the contextual, the particular: these require a human hand that has done this enough times to know exactly why this time is different.

Your IS doesn’t need a better algorithm. It needs someone who has looked through enough lenses to know which one fits.

The view from outside the cockpit that the driver structurally cannot have is the subject of “The Pit Wall and the Cockpit.” The IS equivalent of a building where nobody has looked inside the walls is the subject of “Looking Inside the Walls.”